What We Have Learned About Putting AI to Work — In Government, In Classrooms, and In People

This week, I sat on a panel for a UK government learning journey on AI, and separately a publication asked me to comment on the same subject.

I sat down to write the responses and realised something. The answers weren’t really about Singapore. They were about patterns we’ve seen across 300+ enterprise AI projects, 500+ AI engineers we’ve trained, and over 1,000 organisations we’ve engaged through AI Singapore’s (AISG) programmes since 2017.

So I’m sharing them here, expanded, for the AI-First Nation Insights community. If you’re a policymaker, a programme leader, or someone trying to get an AI initiative off the ground in your own organisation — this is what nine years of doing this in Singapore has taught us.

Where AI Actually Delivers — and Why Most Deployments Don’t

The fact is, the most tangible impact we’ve seen in Singapore’s public sector is unglamorous. Claims and case triage. Document understanding. Predictive maintenance for infrastructure. Anomaly detection in compliance. Assistive tools for frontline officers. In education, the genuine wins are personalised learning support and freeing teachers from administrative load — not replacing pedagogy.

These aren’t the projects that make headlines. They’re the ones that work.

What separates them from the rest comes down to three things, in our experience.

First, the organisation was AI-ready before the project started. Leadership commitment was real. The data actually existed in usable form. There was an engineering team capable of taking the solution into production.

Second, there was a clear ROI and a defined business owner. Not a CEO pet project. Not a vendor-driven proof-of-concept that nobody asked for.

Third, somebody owned the deployment and the change management. Because a model sitting in a Jupyter notebook helps nobody.

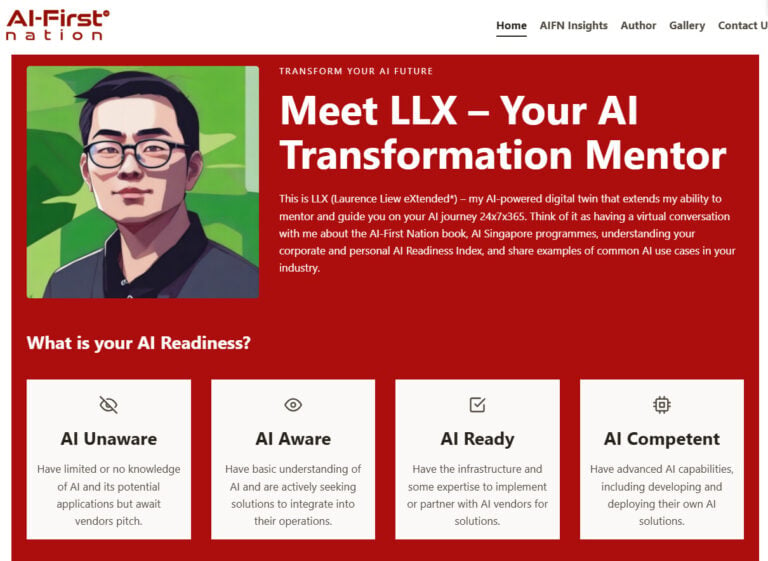

This pattern is exactly why we built the AI Readiness Index (AIRI) in 2019. After hundreds of engagements with companies, we noticed organisations needed a structured way to assess and improve their AI readiness — across five pillars: Organisational Readiness, Ethics & Governance, Business Value, Data Readiness, and Infrastructure Readiness. Without that diagnostic, everyone thinks they’re ready to do AI. With it, the conversation gets honest very quickly.

Across our 100E portfolio, the pattern is consistent: the AI is rarely what fails. It’s the readiness around it.

The Talent Flow Model: Why We Deliberately Over-Train

Singapore’s approach to AI in education and workforce development has been to think across the entire population, not just at the top. We’ve structured it as a flow.

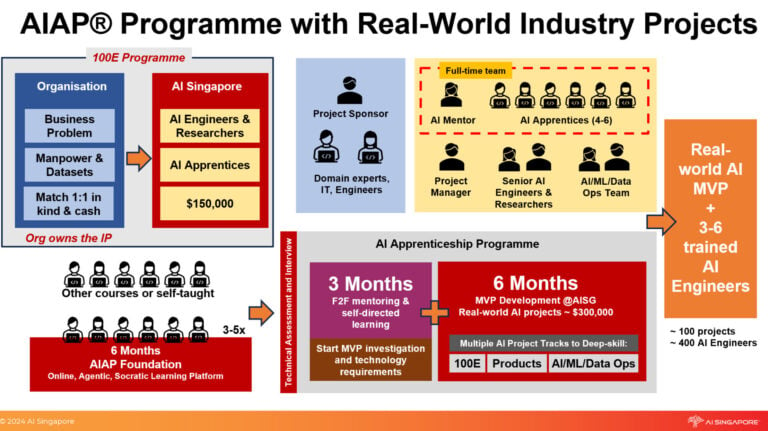

AI4E (AI for Everyone) reaches over 300,000 students, professionals, and civil servants for general literacy. AI for Industry (AI4I) builds intermediate proficiency for working professionals. The AI Apprenticeship Programme (AIAP) produces deep-skilled AI engineers. Each layer feeds the next.

What AIAP has shown us, after 20+ batches and 500+ graduates, is that the conventional assumptions about who can do AI are simply wrong.

Roughly 80% of our AIAP graduates do not have a Computer Science degree. They come from economics, psychology, biology, mechanical engineering, mathematics teaching, law, accountancy. Some were software developers; many were not. What matters is the ability to learn fast, comfort with data, and a genuine passion for solving problems.

Everyone can learn to program. Not everyone has a passion for solving tough engineering and business problems with data.

The numbers tell the story. Around 70% of our apprentices receive a job offer before they graduate. 99% land one within six months — placed at DBS, Google, Meta, ST Engineering, and across Singapore industry. This is not an accident. AIAP is built around solving real industry problems via 100E, not synthetic exercises. Three months of deep-skilling, six months on a real enterprise project, with real stakeholders and real deployment expectations.

That is the difference between a certificate programme and an apprenticeship.

The deeper insight — and the one that policymakers in other countries often miss — is that we deliberately over-train. AISG produces more AI engineers than we can absorb, by design. The outflow into industry is the point, not a leakage problem.

In short: if your national AI talent strategy treats retention as the success metric, you’re measuring the wrong thing.

The Real Bottleneck Is Not the AI

When people ask me what’s stopping AI from scaling across public institutions, they often expect a technical answer. Compute. Data infrastructure. Algorithms. Talent shortage.

Honestly? The bottleneck is rarely the AI. It’s organisational readiness.

We see the same pattern repeatedly. An enthusiastic executive sponsor. Allocated budget. A confident claim that “we have the data.” Then the project starts, the executive disappears after kickoff, the data turns out to be scattered across fifteen spreadsheets and three legacy systems, and the IT team is too busy with other priorities to engage.

This is why our 100E project vetting process — before any commitment of resources — requires the organisation to build a baseline model with their own data. It’s a forcing function. If they cannot get to a baseline, they’re not ready, and we’d be wasting everyone’s time. We also require a 1:1 financial co-investment, which turns curiosity into commitment.

This is also why AIRI has become so important. It places an organisation at one of four maturity levels, and most of the organisations we observe in the wild sit at AI Unaware or AI Aware. Very few are genuinely AI Ready, fewer still AI Competent. The honest conversation begins with “where are you actually, today?” and not “where would you like to be?” Once an organisation knows it’s at Level 2 instead of Level 4, the next steps become obvious. The interventions become targeted. The roadmap becomes real.

The second bottleneck is post-deployment ownership. Many AI projects in the public sector struggle not at the modelling stage, but at the handover. There’s no engineering team on the receiving end to maintain the model, monitor drift, retrain on new data, or integrate updates into the workflow. Our response is simple: a capable engineering team on the sponsor side is a precondition for project approval, not a nice-to-have.
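To make the gating logic concrete, here is a minimal sketch of the three preconditions described above: a baseline model built on the sponsor's own data, a 1:1 financial co-investment, and a capable engineering team on the sponsor side. The `ProjectProposal` record, its field names, and the `vet` function are hypothetical names invented for illustration; this is not AISG's actual vetting tooling, and the real process involves judgment that a checklist cannot capture.

```python
from dataclasses import dataclass


@dataclass
class ProjectProposal:
    """Hypothetical record of what a sponsor brings to vetting."""
    sponsor: str
    baseline_model_built: bool   # sponsor trained a baseline on its own data
    sponsor_cash: float          # sponsor's cash contribution
    programme_funding: float     # funding requested from the programme
    has_engineering_team: bool   # team able to maintain the model after handover


def vet(proposal: ProjectProposal) -> list[str]:
    """Return the unmet preconditions; an empty list means the proposal passes."""
    gaps = []
    if not proposal.baseline_model_built:
        gaps.append("no baseline model built on the sponsor's own data")
    if proposal.sponsor_cash < proposal.programme_funding:
        gaps.append("sponsor co-investment is below a 1:1 match")
    if not proposal.has_engineering_team:
        gaps.append("no sponsor-side engineering team to own the model after handover")
    return gaps


# Illustrative values only: a sponsor with a baseline but no match and no team.
example = ProjectProposal(
    sponsor="Example Agency",
    baseline_model_built=True,
    sponsor_cash=50_000,
    programme_funding=100_000,
    has_engineering_team=False,
)
for gap in vet(example):
    print("Not ready:", gap)
```

Returning the list of gaps, rather than a single pass or fail flag, mirrors the point of the diagnostic: the sponsor learns exactly what to fix before coming back.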

The third — and increasingly important — bottleneck is shadow AI. With ChatGPT and similar tools now in everyone’s hands, the risk is no longer that public servants don’t use AI. It’s that they use it without governance. Workforce-wide AI literacy through programmes like AI4E is no longer a “nice to have.” It is the mitigation layer.

What’s Actually Worked — Earned from Real Failures

A few patterns stand out from nine years of running national AI programmes, all of them earned from real failures rather than theoretical design.

Co-investment beats pure subsidy. When a public agency or company has skin in the game — even a modest cash match — engagement quality changes overnight. Free pilots get treated as free pilots. Co-funded projects get senior attention. Our 1:1 matching requirement on 100E is one of the most important things we ever did, even though it sometimes loses us projects upfront.

Ship real-world projects, not Jupyter notebooks or reports. The model that works for us is to pair our AI engineers and apprentices with the sponsor’s domain experts for the duration of the project, rather than deliver a consultant’s deck and walk away. Domain expertise and engineering have to fuse, not just exchange documents. We also hot-house our apprentices in our office rather than placing them at the sponsor’s site: the intensity of hot-housing, paired with regular sprint reviews with the project sponsors, ensures deep learning and keeps the project on track.

Diagnose before you intervene. Targeted interventions only work when you know what you’re targeting. AIRI has now been used by thousands of organisations across Singapore and adopted internationally by GPAI as a global readiness benchmark. The reason it travels well is simple: every country, every sector, every organisation has the same 12 questions to answer before AI works for them. The answers differ. The questions don’t.

Build in ethics review from day one. We baked governance into the 100E project approval process from 2018. By the time governance frameworks became globally fashionable, we were already operating along those lines. Retrofitting governance is far more painful than designing it in.

Be honest about what doesn’t work. Some projects we’ve terminated mid-flight because the sponsor lost their AI team, or the data turned out not to exist as claimed. That is not a programme failure — that’s the system working. Pretending every project succeeds is how you end up with the 70% AI-failure-rate statistics that get cited in the press.

The pattern across all of it: AI deployment in public systems is 20% modelling and 80% organisation, data, governance, and people.

The countries and institutions that internalise this will move. Those that keep treating AI as a procurement exercise will keep being disappointed.

Closing Thought

When I started with AI Singapore as employee #1 in 2017, the original KPI given to me was simple: deliver 100 AI projects to the industry. Everything else — AIAP, AI4E, LearnAI, AIRI, our work on global AI standards, GPAI co-chairing — emerged because we kept hitting walls and needed to fix them so we could deliver on that original number.

Programmes that look “comprehensive” from the outside often look that way because they were built to solve actual gaps, not because someone designed a master plan.

That is, in the end, the most important lesson I’d offer to any policymaker or programme leader trying to do this in their own context: start with a clear problem, build the bridge as you walk across it, and be ruthlessly honest about what’s not working.

The rest follows.